
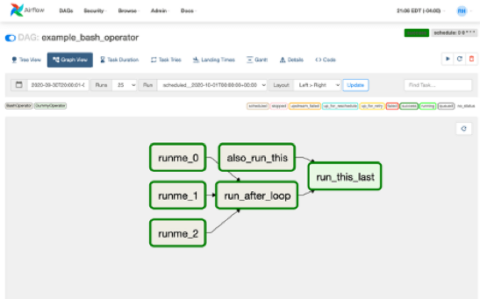

Dependencies are a powerful and popular Airflow feature. In Airflow, a DAG is a collection of tasks with defined dependencies and properties, and we define them in Python. You can manage dependencies between individual tasks and between task groups.

Tasks can also be generated from data at runtime. This is similar to defining your tasks in a for loop, but instead of having the DAG file fetch the data and do that itself, the scheduler does it based on the output of a previous task.

The example DAGs cover three ways of creating dependencies across DAGs: using the TriggerDagRunOperator, as highlighted in trigger-dagrun-dag.py; using the ExternalTaskSensor, as highlighted in external-task-sensor-dag.py; and using the Airflow API (for Airflow 2.0 or greater), as highlighted in api-dag.py. All of these examples should work out of the box if you are running Airflow 2.0 or greater (this repo runs Airflow 2.1 using the Astronomer CLI).

A related question is how to run an Airflow DAG with conditional tasks. In one such case there are six tasks in total, and how they execute depends on the value of a single field, flagvalue, that arrives in the input JSON. If flagvalue is true, all tasks need to execute in a fixed order: first task1, then task2 and task3 together in parallel, and so on for the remaining tasks.
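The flagvalue decision described above can be sketched as a plain Python function. This is only a minimal sketch under stated assumptions: the task id `flag_false_path` is hypothetical (the text does not say what should happen when the flag is false), and the input JSON is assumed to arrive as a dictionary, as it does in `dag_run.conf`.

```python
from typing import Any, Mapping, Optional


def flag_is_true(value: Any) -> bool:
    """Interpret the flag whether it arrives as a JSON boolean or as a string."""
    if isinstance(value, bool):
        return value
    if isinstance(value, str):
        return value.strip().lower() == "true"
    return False


def choose_branch(conf: Optional[Mapping[str, Any]]) -> str:
    """Return the id of the task that should run first.

    "task1" comes from the description above; "flag_false_path" is a
    hypothetical placeholder for whatever should run when the flag is false.
    """
    flag = (conf or {}).get("flagvalue")
    return "task1" if flag_is_true(flag) else "flag_false_path"


if __name__ == "__main__":
    print(choose_branch({"flagvalue": True}))
    print(choose_branch({"flagvalue": "false"}))
```

In an Airflow DAG, a function like this could serve as the callable of a BranchPythonOperator, reading the input from `dag_run.conf`, with task2 and task3 both set downstream of task1 (for example `task1 >> [task2, task3]`) so that they run in parallel once task1 finishes.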

Dynamic Task Mapping allows a workflow to create a number of tasks at runtime based on current data, rather than the DAG author having to know in advance how many tasks would be needed.

Related tooling can automatically generate a pipeline, or DAG, of dbt transformation models. In that setup, a dependency on the source package is declared in the package's packages.yml.

Because dependencies between DAGs are hard to manage, one proposal is to define a Mediator DAG to handle dependencies and bring cohesion to a data pipeline without losing its purpose.

This repo contains examples to implement cross-DAG dependencies in your Airflow DAGs. There are multiple ways of achieving cross-DAG dependencies in Airflow, each of which is represented by an example DAG in this repo. A guide with an in-depth explanation of how to implement cross-DAG dependencies can be found here.
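Of the cross-DAG methods, the Airflow API approach comes down to an HTTP call that asks Airflow to start a run of another DAG. The sketch below only builds that request using the standard library, so it can be inspected without a running Airflow instance. It assumes the Airflow 2 stable REST API endpoint `POST /api/v1/dags/{dag_id}/dagRuns` and basic authentication being enabled on the webserver; the base URL, DAG id, and credentials are hypothetical placeholders, and this is not the code in api-dag.py.

```python
import base64
import json
import urllib.parse
import urllib.request
from typing import Any, Dict, Optional


def build_trigger_request(
    base_url: str,
    dag_id: str,
    conf: Optional[Dict[str, Any]] = None,
    username: str = "admin",   # hypothetical credentials
    password: str = "admin",
) -> urllib.request.Request:
    """Build (but do not send) a request that triggers a run of `dag_id`."""
    url = "{}/api/v1/dags/{}/dagRuns".format(
        base_url.rstrip("/"), urllib.parse.quote(dag_id, safe="")
    )
    # The optional "conf" object is passed to the triggered DAG run.
    body = json.dumps({"conf": conf or {}}).encode("utf-8")
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )


if __name__ == "__main__":
    req = build_trigger_request("http://localhost:8080", "dependent-dag", {"source": "upstream"})
    print(req.get_method(), req.full_url)
    # To actually send it against a running Airflow webserver:
    # with urllib.request.urlopen(req) as resp:
    #     print(resp.status)
```

Keeping request construction separate from sending makes it easy to check the URL and payload before pointing the call at a real deployment.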

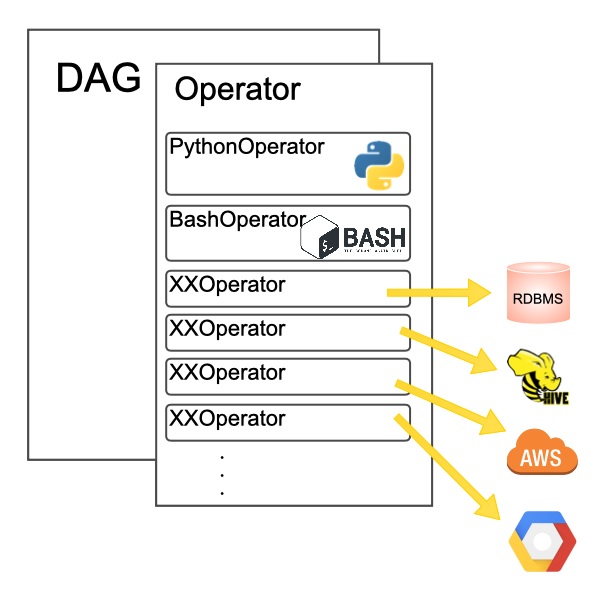

DAG dependencies in Apache Airflow might be one of the most popular topics. I have received countless questions about them: Is it possible? How? What are the best practices? The list goes on. It's funny, because wondering how to do that comes naturally even when we are beginners. We discuss when it's useful to create dependencies between your DAGs and multiple methods for doing so.

A Task is the basic unit of execution in Airflow. Tasks are arranged into DAGs, and then have upstream and downstream dependencies set between them in order to express the order they should run in. There are three basic kinds of Task; the first is Operators, predefined task templates that you can string together quickly to build most parts of your DAGs. We are creating a DAG, which is the collection of our tasks with dependencies between the tasks.

I am using Airflow to run a set of tasks inside a for loop. The purpose of the loop is to iterate through a list of database table names and perform the following actions: for each tablename in listoftables, if the table exists in the database (BranchPythonOperator), do nothing (DummyOperator); otherwise, create the table (JdbcOperator) and insert records into the table.

In conclusion, it is sometimes not practical to combine multiple DAGs into one.

At QuintoAndar we seek automation and modularization in our data pipelines and believe that breaking them into many responsibility modules, a.k.a. DAGs, enhances maintainability, reusability and understanding when moving data from one point to another. However, extending interconnections between DAGs tends to reduce those enhancements and make the pipelines complex and, above all, there is no explicit built-in solution in Airflow for them. Cross-DAG dependency may reduce cohesion in data pipelines and, without an explicit solution in Airflow or in a third-party plugin, those pipelines tend to become complex to handle. That is the reason we, at QuintoAndar, have created an intermediate DAG called Mediator to handle relationships across data pipelines, so that they are scalable and maintainable by any team.

The Mediator DAG in Airflow has the responsibility of looking for successfully finished DAG executions that may represent the previous step of another. That is, if a DAG is dependent on another, the Mediator will take care of checking and triggering the necessary objects for the data flow to continue.
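The Mediator's core decision, which dependent DAGs can be triggered given the upstream DAGs that have finished successfully, can be illustrated with a small pure-Python sketch. This is not QuintoAndar's implementation: the function name, the shape of the dependency map, and the DAG ids are all hypothetical, and a real Mediator would read run states from Airflow rather than from a list.

```python
from typing import Dict, Iterable, List, Set


def dags_ready_to_trigger(
    depends_on: Dict[str, Set[str]],
    succeeded: Iterable[str],
    already_triggered: Iterable[str] = (),
) -> List[str]:
    """Return the DAG ids whose upstream DAGs have all finished successfully.

    depends_on maps each dependent DAG id to the set of DAG ids it waits for.
    DAGs that have already succeeded or already been triggered are skipped.
    """
    done = set(succeeded)
    skip = set(already_triggered) | done
    ready = [
        dag_id
        for dag_id, upstream in depends_on.items()
        if dag_id not in skip and upstream <= done
    ]
    return sorted(ready)


if __name__ == "__main__":
    # Hypothetical pipeline: two extract DAGs feed two load DAGs, which feed a report.
    dependencies = {
        "load_sales": {"extract_sales"},
        "load_users": {"extract_users"},
        "report": {"load_sales", "load_users"},
    }
    print(dags_ready_to_trigger(dependencies, ["extract_sales"]))
```

Keeping the dependency map in one place is what lets the individual DAGs stay unaware of each other; only the Mediator needs to know which DAG follows which.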
